When their values don’t change throughout the animation, CSS width / height animations can run on the Compositor, instead of being forced to run on the Main Thread.

A rather geeky/technical weblog, est. 2001, by Bramus

width or height no longer forces a Main Thread animation (in Chrome, under the right conditions)
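To make the “values don’t change” condition concrete, here’s a small TypeScript sketch that checks whether an animation’s width and height keyframe values are all identical. The helper name `sizeIsConstant` is hypothetical, and it only tests that one condition. Chrome’s full set of “right conditions” may well include more, so treat a `true` as “worth profiling”, not as a guarantee of a Compositor animation. It also assumes every keyframe is listed explicitly (no implicit from/to) and compares values as strings, so `100px` vs `100.0px` counts as a change.

```typescript
// Hypothetical helper, not a Chrome or Web Animations API.
// A keyframe is a plain object, like those returned by
// KeyframeEffect.getKeyframes() in the browser (property values are strings).
type KeyframeLike = Record<string, unknown>;

// Returns true when width and height (if animated at all) hold the same
// value in every keyframe. Assumes all keyframes are explicit: with fewer
// than two keyframes, or a property missing from some keyframes, an
// implicit from/to value could differ, so we conservatively return false.
function sizeIsConstant(keyframes: KeyframeLike[]): boolean {
  for (const prop of ["width", "height"]) {
    const present = keyframes.filter((kf) => prop in kf);
    if (present.length === 0) continue; // property not animated
    if (keyframes.length < 2 || present.length !== keyframes.length) {
      return false; // can't rule out an implicit keyframe with another value
    }
    // Compare as strings: "100px" and "100.0px" are treated as different.
    const distinct = new Set(present.map((kf) => String(kf[prop])));
    if (distinct.size > 1) return false;
  }
  return true;
}
```

In a browser, you could feed it `(animation.effect as KeyframeEffect).getKeyframes()` for each entry of `element.getAnimations()`.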

Beware when manipulating the coordinates of the View Transition’s ::view-transition-group(*) pseudo. Depending on where you read those coordinates from, you might end up with layout jumps when writing them back. This post details the pitfalls and how to deal with them, unlocking more performant animations on the ::view-transition-group() pseudo along the way.

With @property now being Baseline Newly Available, I thought it’d be a good time to benchmark the impact – if any – it has on the performance of your CSS. When starting to use a new CSS feature it’s important to understand its impact on the performance of your websites, whether positive or negative. With @property …

Continue reading “Benchmarking the performance of CSS @property”

This talk by Patrick Brosset is one of my favorite talks from this year’s CSS Day Conference. How do browsers actually recalculate styles when webpages change? Can the way you write CSS impact the speed of the recalculation process? In this talk, we’ll go through the details of how browser engines react to DOM changes …

Harry Roberts takes a look at some more technical and non-obvious aspects of optimising Largest Contentful Paint: Largest Contentful Paint (LCP) is my favourite Core Web Vital. It’s the easiest to optimise, and it’s the only one of the three that works the exact same in the lab as it does in the field (don’t …

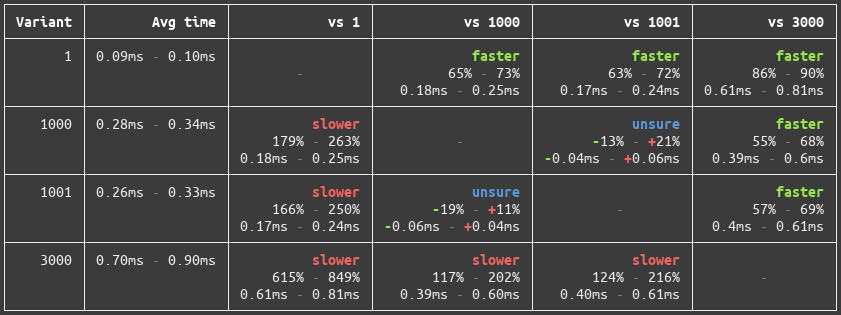

From the Polymer team: Tachometer is a tool for running benchmarks in web browsers. It uses repeated sampling and statistics to reliably identify even tiny differences in runtime. To compare two files, run it like so: `npx tachometer variant1.html variant2.html`. Tachometer will open Chrome and load each HTML file, measuring the time between `bench.start()` and …

Continue reading “Tachometer – Statistically rigorous benchmark runner for the web”
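Tachometer’s own statistics aren’t reproduced here, but the general idea behind “repeated sampling and statistics” can be sketched: collect many runtime samples per variant, compute a confidence interval for the difference of the means, and only declare a winner when that interval excludes zero. This is a simplified stand-in, not Tachometer’s implementation. It uses Welch’s standard error with a normal approximation (z = 1.96, roughly 95%), where a real tool might use Student’s t instead, and the function and field names are made up for illustration.

```typescript
// Simplified sketch of comparing two sets of runtime samples (ms).
// Not Tachometer's code: uses a normal approximation for the interval.

function mean(xs: number[]): number {
  return xs.reduce((sum, x) => sum + x, 0) / xs.length;
}

// Sample variance with Bessel's correction (divide by n - 1).
function variance(xs: number[]): number {
  const m = mean(xs);
  return xs.reduce((sum, x) => sum + (x - m) ** 2, 0) / (xs.length - 1);
}

interface Comparison {
  diff: number; // mean(a) - mean(b)
  low: number; // lower bound of the interval on diff
  high: number; // upper bound of the interval on diff
  verdict: "a is slower" | "a is faster" | "unsure";
}

function compare(a: number[], b: number[], z = 1.96): Comparison {
  if (a.length < 2 || b.length < 2) {
    throw new Error("need at least 2 samples per variant");
  }
  const diff = mean(a) - mean(b);
  // Welch standard error: does not assume equal variances.
  const se = Math.sqrt(variance(a) / a.length + variance(b) / b.length);
  const low = diff - z * se;
  const high = diff + z * se;
  // Only call a winner when the whole interval sits on one side of zero.
  const verdict = low > 0 ? "a is slower" : high < 0 ? "a is faster" : "unsure";
  return { diff, low, high, verdict };
}
```

For example, `compare([10, 11, 10, 11], [20, 21, 20, 21])` gives a difference of -10 ms with an interval of roughly -10.8 to -9.2 ms. That interval lies entirely below zero, so the verdict is `"a is faster"`. More samples shrink the interval, which is how tiny differences become detectable.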

The folks from builder.io set out to create a way to prevent Third-Party Scripts from blocking the main thread. The result is Partytown, which runs Third-Party Scripts within a Web Worker. Partytown is able to sandbox and isolate third-party scripts within a web worker and allow, or deny, access to main thread APIs. This includes …

Continue reading “Partytown: Run Third-Party Scripts off the Main Thread in a Web Worker”
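The “allow, or deny, access to main thread APIs” part can be pictured with a plain JavaScript `Proxy`. This is a toy illustration only, not Partytown’s API, configuration, or implementation: `sandbox` and the fake `window` object are hypothetical, and everything here runs on a single thread. It just shows the gating idea, where code handed the proxy can touch allowlisted members and gets an error for everything else.

```typescript
// Toy illustration (hypothetical, not Partytown's API or implementation):
// wrap an object so only allowlisted string-keyed members can be used.
function sandbox<T extends object>(target: T, allowed: ReadonlySet<string>): T {
  return new Proxy(target, {
    get(obj, prop, receiver) {
      // Symbol keys are passed through untouched; only names are gated.
      if (typeof prop === "string" && !allowed.has(prop)) {
        throw new Error(`access to "${prop}" denied`);
      }
      return Reflect.get(obj, prop, receiver);
    },
    set(obj, prop, value, receiver) {
      if (typeof prop === "string" && !allowed.has(prop)) {
        throw new Error(`write to "${prop}" denied`);
      }
      return Reflect.set(obj, prop, value, receiver);
    },
  });
}

// A stand-in for a main-thread object such as window or document.
const fakeWindow = { title: "Home", cookie: "secret" };
const win = sandbox(fakeWindow, new Set(["title"]));
```

Here `win.title` reads and writes through to `fakeWindow`, while any access to `win.cookie` throws.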

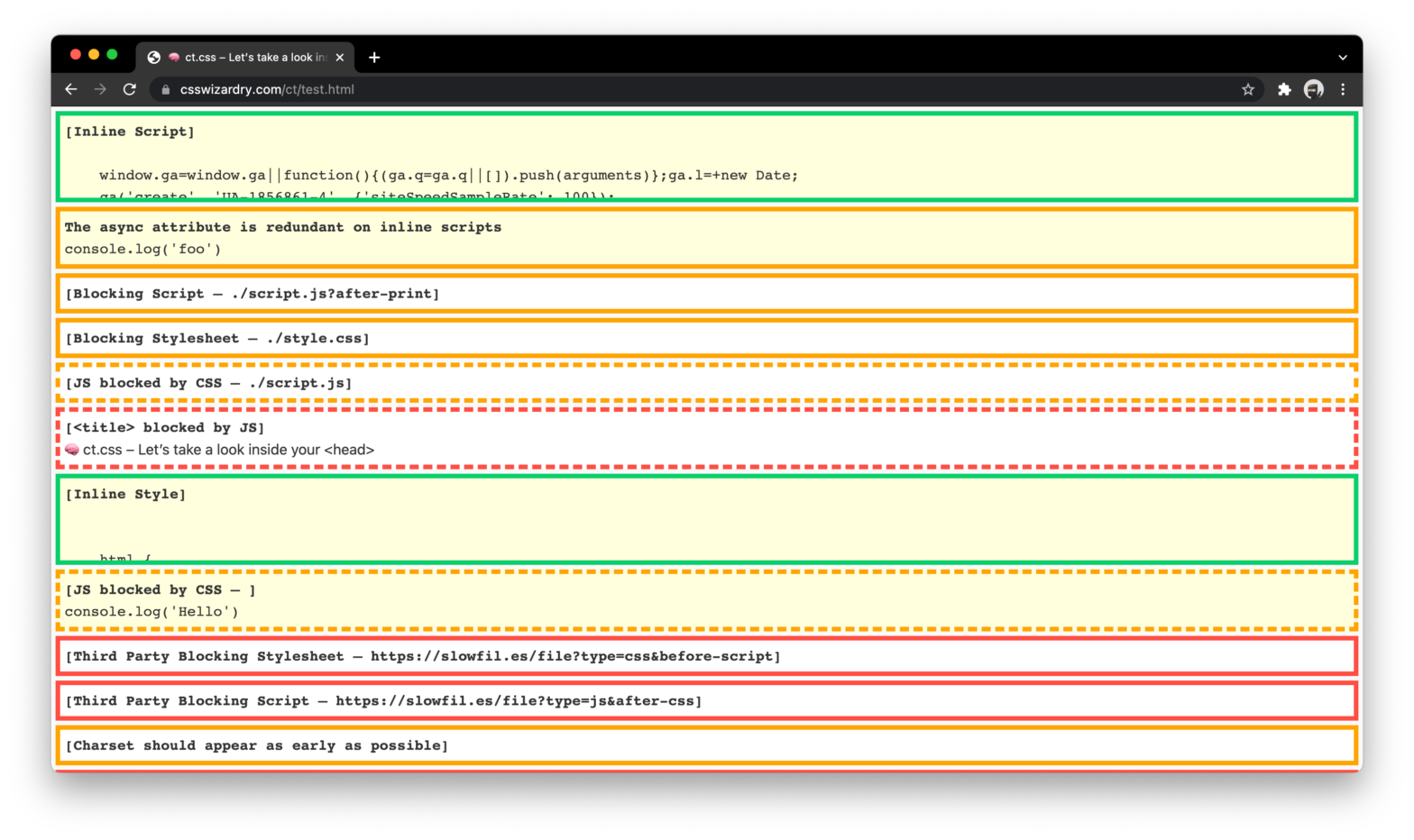

ct.css – Let’s take a look inside your <head>

Harry Roberts created ct.css, a utility CSS file that x-rays your site’s <head>: Your <head> is the single biggest render-blocking part of your page—ensuring it is well-formed is critical. ct.css is a diagnostic CSS snippet that exposes potential performance issues in your page’s <head> tags. `<link rel="stylesheet" href="https://csswizardry.com/ct/ct.css" class="ct" />` The CSS basically adds display: …

Continue reading “ct.css – Let’s take a look inside your <head>”
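ct.css does its x-raying with CSS selectors, but the kind of rule it applies is easy to express in code too. Here’s a hypothetical TypeScript sketch of one such check, not taken from ct.css: flag external `<script src>` elements in the `<head>` that have neither `async` nor `defer` and aren’t `type="module"`, since those block the HTML parser while they download and run. The `HeadNode` shape is an assumption standing in for real DOM elements.

```typescript
// Hypothetical, simplified stand-in for a <head> child element.
interface HeadNode {
  tag: string;
  attrs: Record<string, string>; // boolean attributes map to ""
}

// Flags parser-blocking external scripts. One illustrative rule only;
// ct.css itself checks many more things.
function findBlockingScripts(head: HeadNode[]): string[] {
  const issues: string[] = [];
  head.forEach((node, index) => {
    const a = node.attrs;
    const isExternalScript = node.tag === "script" && "src" in a;
    // async, defer, and module scripts don't block the parser.
    const isDeferred = "async" in a || "defer" in a || a.type === "module";
    if (isExternalScript && !isDeferred) {
      issues.push(`<head> child #${index}: synchronous script ${a.src}`);
    }
  });
  return issues;
}
```

In a real page you could build the input from `document.head.children`, or skip the code entirely and run a selector such as `head script[src]:not([async]):not([defer]):not([type="module"])` through `querySelectorAll`.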