Harry Roberts takes a look at some more technical and non-obvious aspects of optimising Largest Contentful Paint: Largest Contentful Paint (LCP) is my favourite Core Web Vital. It’s the easiest to optimise, and it’s the only one of the three that works the exact same in the lab as it does in the field (don’t …

Tag Archives: optimization

Image Optimizer

Vector? Raster? Why Not Both!

Starting off with a 10.06MB SVG, Zach Leatherman tried several routes to reduce the weight of the hero image on the right-hand side of the JamStackConf.com home page. Eventually he settled on an approach where he layered a transparent WebP image on top of an SVG. Both layers were optimized individually, leaving only 78KB …

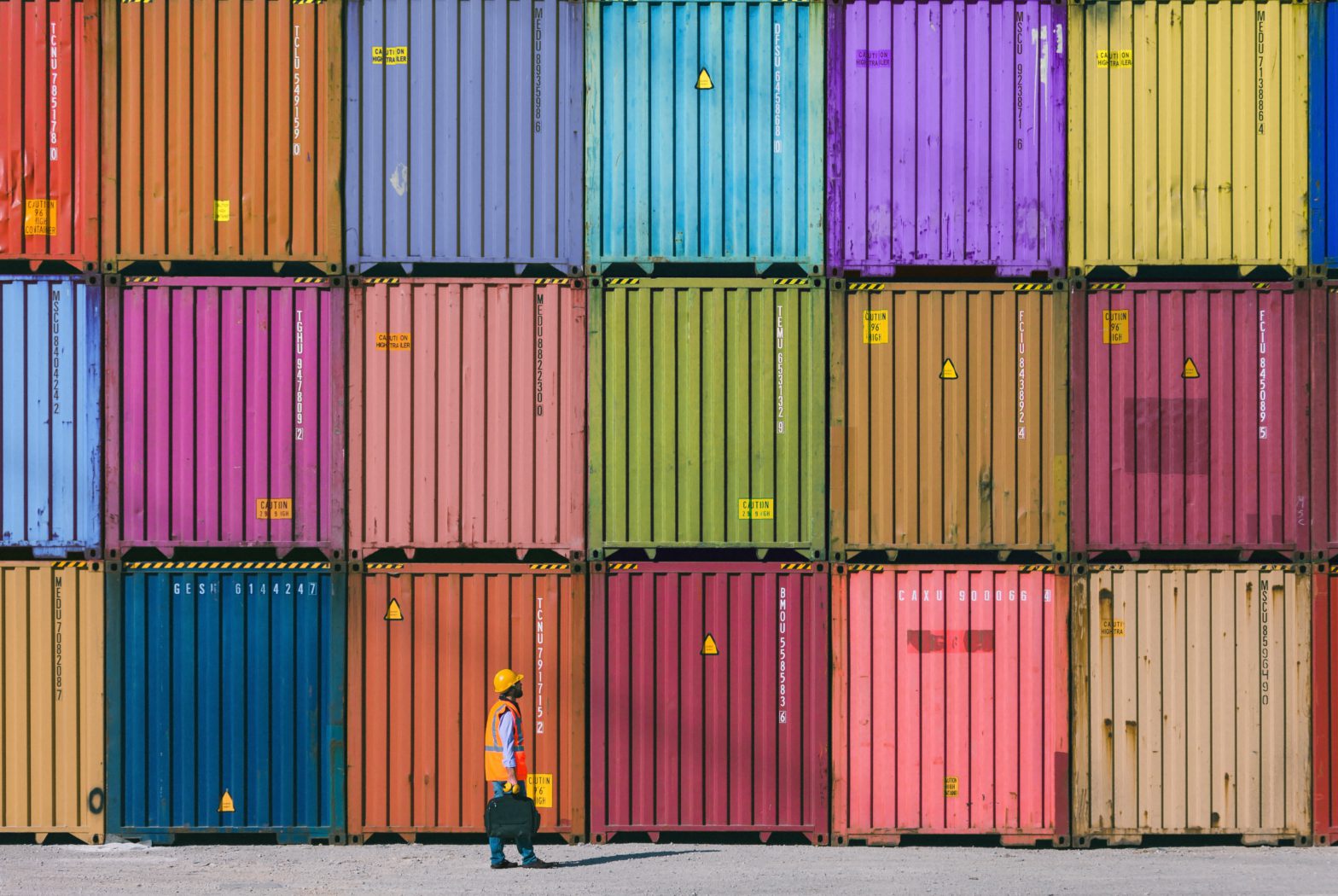

How to build smaller Docker images

When you’re building a Docker image, it’s important to keep its size under control. Smaller images mean faster deployments and transfers. I wish I had found this post before I started playing with Docker, as it is packed with solid advice that I had to find out “along the way” myself. In short: Find the right …

Automatically compress images to your Pull Requests with this GitHub Action

The folks at Calibre have released a GitHub Action named “Image Actions”, and I must say, it looks insane:

Image actions will automatically compress jpeg and png images in GitHub Pull Requests.

- Compression is fast, efficient and lossless
- Uses mozjpeg + libvips, the best image compression available
- Runs in GitHub Actions, so it’s visible …


How Web Content Can Affect Power Usage

The WebKit blog on how to optimize your pages so that they don’t drain the batteries of your visitors’ devices: Users spend a large proportion of their online time on mobile devices, and a significant fraction of the rest is users on untethered laptop computers. For both, battery life is critical. In this post, …

Essential Image Optimization

Essential Image Optimization is a free online eBook by Addy Osmani: Images take up massive amounts of internet bandwidth because they often have large file sizes. According to the HTTP Archive, 60% of the data transferred to fetch a web page is images composed of JPEGs, PNGs and GIFs. As of July 2017, images …

Flipboard Engineering: 60fps on the mobile web

As of earlier this week, Flipboard is also a website. Because they wanted it to mimic their mobile apps, the site would sport lots of animations. During their first tests, they found the DOM to be too slow (although that’s not entirely true; see this video and its description, for example). And then, an epiphany: Most modern mobile …


Supercharging your Gruntfile

In this article, we won’t focus on what numerous Grunt plugins do to your actual project code, but on the Grunt build process itself. We will give you practical ideas on:

- How to keep your Gruntfile neat and tidy,
- How to dramatically improve your build time,
- And how to be notified when a build happens. …

Tools for image optimization

Where possible, it’s best to try automating image optimization so that it’s a first-class citizen in your build chain. To help, I thought I’d share some of the tools I use for this. The post contains not only a list of Grunt plugins one can use, but also a few command-line and online tools. I’ve been …