At the end of 2021, CSS-Tricks (RIP) asked a bunch of authors “What is the one thing people can do to make their websites better?”. This is my submission for that end-of-year series.

A rather geeky/technical weblog, est. 2001, by Bramus

GitHub is currently shipping ES2019-compatible code, and will soon ship ES2020 code. GitHub will soon be serving JavaScript using syntax features found in the ECMAScript 2020 standard, which includes the optional chaining and nullish coalescing operators. This change will lead to a 10kb reduction in JavaScript across the site. Wow, won’t that exclude a whole …
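To make the two ES2020 operators mentioned above concrete, here is a minimal sketch of optional chaining (`?.`) and nullish coalescing (`??`) next to a pre-ES2020 equivalent. The `user` object and its fields are made up for illustration; this is not GitHub's code.

```javascript
// Hypothetical data shape, used only for this illustration.
const user = { profile: { name: "Ada", bio: "" }, settings: null };

// Optional chaining: evaluates to undefined when the value before ?. is null or undefined,
// instead of throwing a TypeError.
const theme = user.settings?.theme;

// Nullish coalescing: falls back only on null or undefined, so an empty string is kept.
const bio = user.profile?.bio ?? "No bio yet";
const themeOrDefault = user.settings?.theme ?? "light";

// Roughly what the same logic looks like without ES2020 syntax.
const legacyTheme =
  user.settings !== null && user.settings !== undefined
    ? user.settings.theme
    : undefined;
const legacyThemeOrDefault =
  legacyTheme !== null && legacyTheme !== undefined ? legacyTheme : "light";
```

The longer legacy form hints at where byte savings can come from when the newer syntax is shipped untranspiled.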

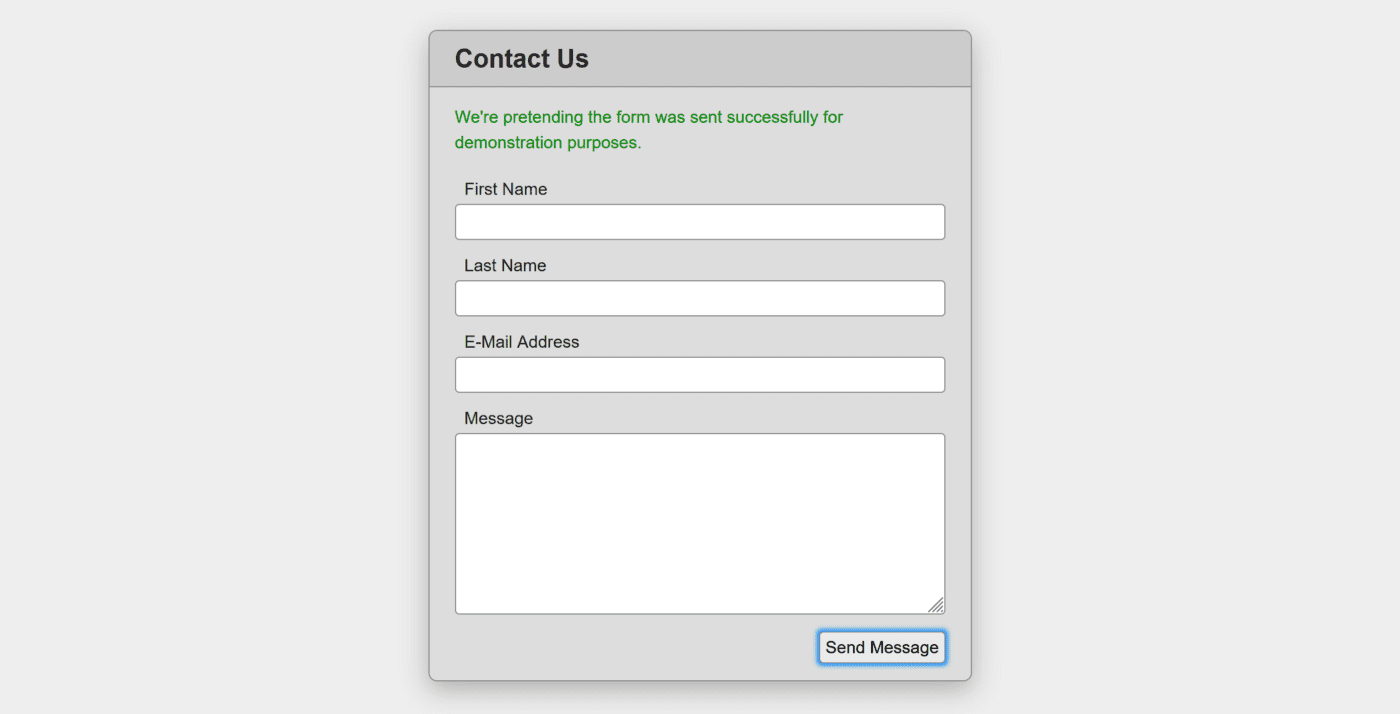

FormData

Jason Knights dissects a form that’s not a form and that relies entirely on JS to handle the form submission. He then takes his own approach that uses an actual <form> that can be submitted, along with some extra JS sprinkled on top to prevent a full reload. By using PROPER name="" in the markup and a …
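The general idea of enhancing a real `<form>` with `FormData` can be sketched as follows. This is not the code from the linked article: the helper names `toUrlEncoded` and `enhanceForm` are hypothetical, and the sketch assumes the form uses `method="post"` and contains only text fields.

```javascript
// Turn a FormData instance into a URL-encoded string.
// Repeated field names are preserved in order.
function toUrlEncoded(formData) {
  const params = new URLSearchParams();
  for (const [name, value] of formData.entries()) {
    // File entries are skipped in this sketch; only string fields are encoded.
    if (typeof value === "string") params.append(name, value);
  }
  return params.toString();
}

// Hypothetical enhancement: intercept the submit event and send the data with fetch.
// Without JavaScript the form still submits normally, because it is a real <form>.
function enhanceForm(form) {
  form.addEventListener("submit", async (event) => {
    event.preventDefault(); // prevent the full page reload
    const data = new FormData(form); // collects every control that has a name attribute
    await fetch(form.action, {
      method: "POST", // assumption: the form is declared with method="post"
      headers: { "Content-Type": "application/x-www-form-urlencoded" },
      body: toUrlEncoded(data),
    });
  });
}

// Only wire things up when running in a browser.
if (typeof document !== "undefined") {
  const form = document.querySelector("form");
  if (form) enhanceForm(form);
}
```

Because `FormData` only picks up controls with a `name` attribute, proper names in the markup are what make both the plain submission and the enhanced one work.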

Continue reading “Using FormData And Enhancing Forms With JavaScript”

<details> with <details-utils>

Zach created a very handy Web Component that augments and wraps around a <details> element. He’s named it <details-utils>. This web component adds five new responsive-friendly enhancements to one or more <details> elements nestled inside: force open/closed; click outside to close; close on esc; animate open/closed; toggle root element class. Using attributes you can control …

Continue reading “Add Responsive-Friendly Enhancements to <details> with <details-utils>”

Chris put together two posts: a first one by Jim Nielsen, who couldn’t update Safari on his Mom’s iPad when a site broke due to the use of the Optional Chaining Operator, and a second one by Eric Bailey with some nuance on the Evergreen part of “Evergreen Browsers”. As Chris wrote: But even browsers …

Continue reading “Not all Evergreen Browsers can be Updated”

In April 2021, Jeremy Keith gave the opening presentation at An Event Apart Spring Summit 2021. In true Jeremy style, this talk starts off with space and the early days of the web, and eventually brings us to the present day. Watch this talk (or read the transcript). And then watch it again. It’s packed with …

Earlier this year Dave Rupert spoke at An Event Apart’s Spring Summit with a talk on Web Components: It’s the year 2021. Lots of us are building our websites and apps with components and design systems, perhaps leveraging a JavaScript framework to help glue all the pieces together. The web has matured in the last …