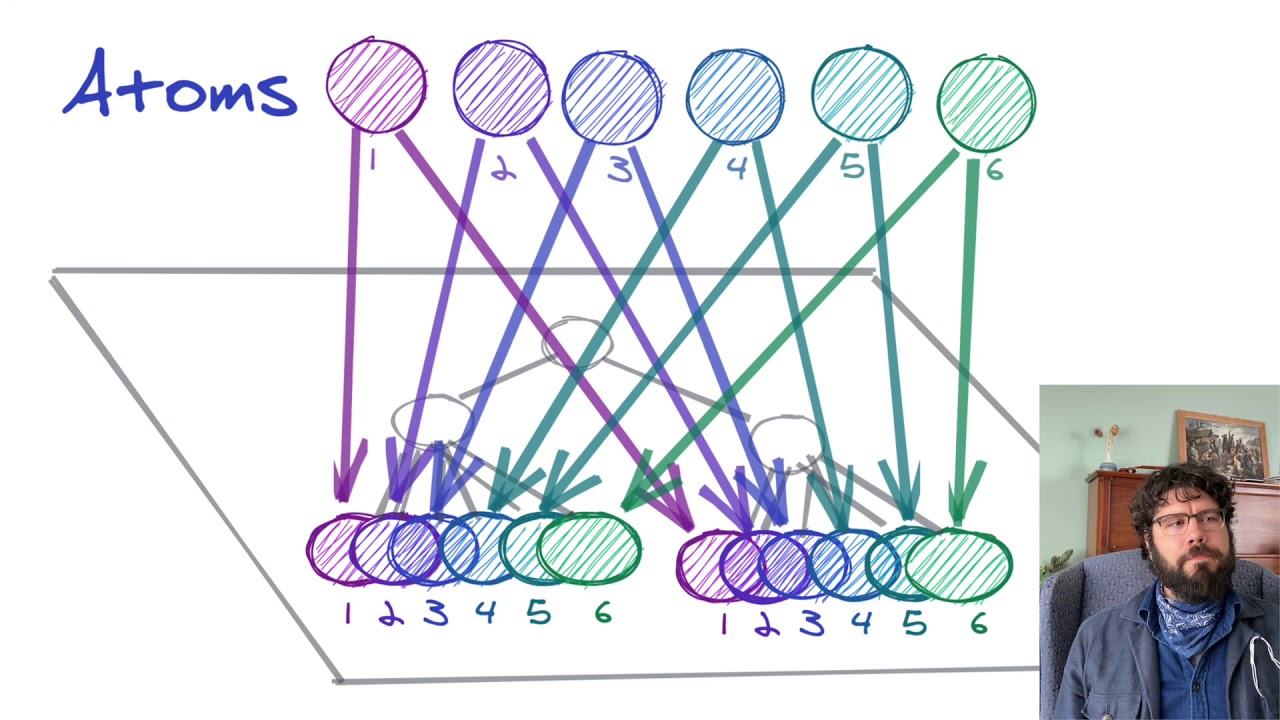

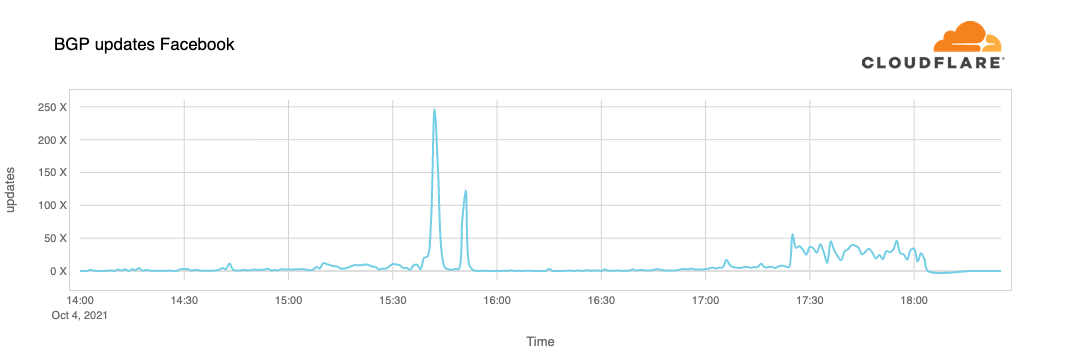

Interesting read on how the folks at Cloudflare saw Facebook go down last night, and how the outage affected traffic on their end. The Internet is literally a network of networks, and it’s bound together by BGP (the Border Gateway Protocol). BGP allows one network (say Facebook) to advertise its presence, meaning the blocks of IP addresses it can deliver traffic to, to the other networks that form the Internet. As we …

Continue reading “Understanding How Facebook Disappeared from the Internet”
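To make the "advertise its presence" idea a bit more concrete, here is a toy Python sketch. It is not real BGP and not anything Cloudflare or Facebook actually runs. It only models one network's table of announced prefixes: routes appear when a prefix is announced, disappear when it is withdrawn, and a lookup picks the most specific matching route. Once a prefix is withdrawn, this network has no route to those addresses at all, which is roughly what "disappearing from the Internet" looks like from the outside. The class `ToyRoutingTable`, the documentation prefix `198.51.100.0/24`, and the documentation ASN `64496` are all hypothetical.

```python
import ipaddress
from typing import Dict, Optional


class ToyRoutingTable:
    """Hypothetical, heavily simplified view of one network's BGP-learned routes.

    Real BGP has peering sessions, path attributes, and best-path selection;
    this sketch only tracks which origin AS announced which IPv4 prefix.
    """

    def __init__(self) -> None:
        self.routes: Dict[ipaddress.IPv4Network, int] = {}

    def announce(self, prefix: str, origin_asn: int) -> None:
        # Announcement: "addresses in this prefix can be reached via this AS".
        self.routes[ipaddress.IPv4Network(prefix)] = origin_asn

    def withdraw(self, prefix: str) -> None:
        # Withdrawal: the route is removed from the table.
        self.routes.pop(ipaddress.IPv4Network(prefix), None)

    def lookup(self, address: str) -> Optional[int]:
        # Longest-prefix match: the most specific covering route wins.
        addr = ipaddress.IPv4Address(address)
        matches = [net for net in self.routes if addr in net]
        if not matches:
            return None  # no route at all: the destination is unreachable from here
        best = max(matches, key=lambda net: net.prefixlen)
        return self.routes[best]


if __name__ == "__main__":
    table = ToyRoutingTable()
    table.announce("198.51.100.0/24", 64496)  # hypothetical network announces itself
    print(table.lookup("198.51.100.53"))      # 64496
    table.withdraw("198.51.100.0/24")         # ...and then withdraws the route
    print(table.lookup("198.51.100.53"))      # None
```

The point of the sketch is that reachability is nothing more than the presence of a route in other networks' tables: the servers behind a withdrawn prefix may still be running, but nobody knows how to get packets to them.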