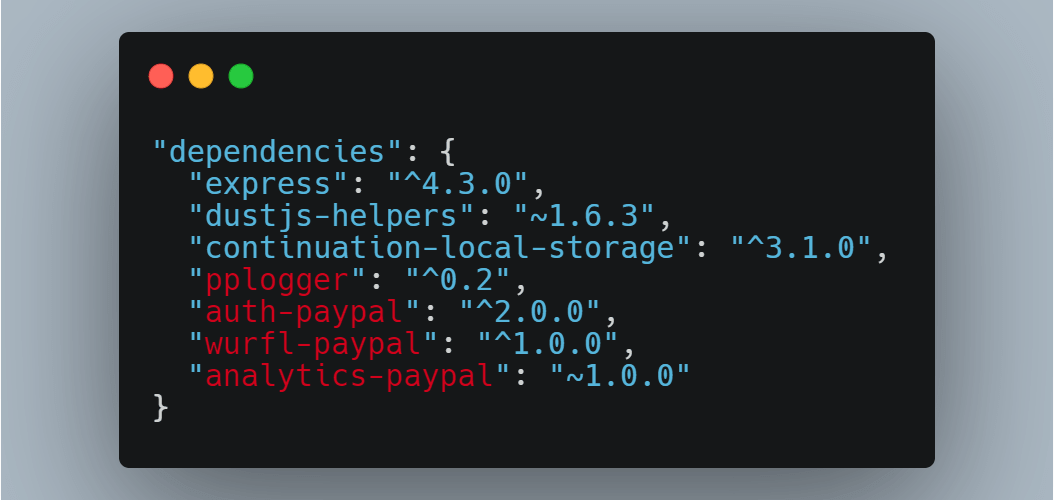

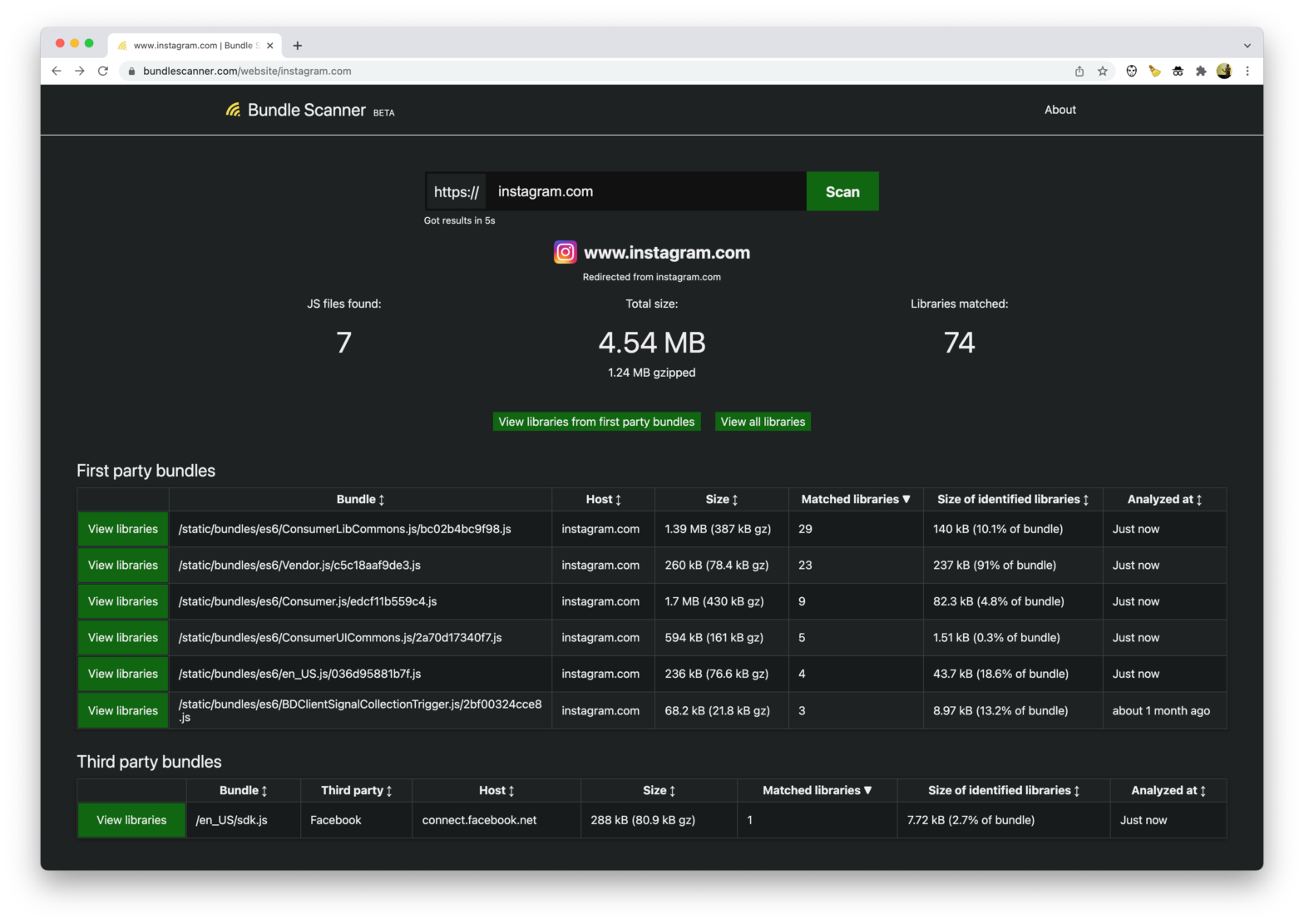

Bundle Scanner identifies which npm libraries are used on any website. It downloads every JavaScript file from a URL and searches through the files for code that matches one of the 35,000 most popular npm libraries. The scanning itself works in a pretty ingenious way: when a user requests a scan of a website, Bundle Scanner …
